Dp 443 en

Stochastic model predictive control

Author: Korda Milan

The setting of this thesis is stochastic optimal control and constrained model predictive control of discrete-time linear systems with additive noise. There are three primary topics, all of which lie at the heart of traditional model predictive control theory but still lack adequate stochastic counterparts.
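For orientation, the system class named above can be written as follows. This is only a sketch: the notation ($A$, $B$, $x_t$, $u_t$, $w_t$, $\mathbb{U}$) is assumed here for illustration and is not taken from the thesis.

$$
x_{t+1} = A x_t + B u_t + w_t, \qquad u_t \in \mathbb{U}, \qquad t = 0, 1, 2, \dots
$$

Here $x_t$ denotes the state, $u_t$ the control input restricted to a constraint set $\mathbb{U}$, and $w_t$ the additive stochastic disturbance.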

First, the finite-horizon stochastic optimal control problem is approached. The objective function is the expectation of the p-norm, and the disturbances are jointly Gaussian but not necessarily independent. We develop an approximation strategy that solves the problem in a certain class of nonlinear feedback policies, for perfect as well as imperfect state information, while ensuring satisfaction of hard input constraints. A bound on the suboptimality of the proposed strategy in the class of nonlinear feedback policies is given.

Second, the question of mean-square stabilizability of stochastic linear systems with bounded control authority is addressed. We provide simplified proofs of existing results on stabilizability of strictly and marginally stable systems, and extend the technique employed to show the stability of positive (or negative) parts of the state of marginally unstable systems provided that the control authority is nonzero, but possibly arbitrarily small. We also prove the existence of a mean-square stabilizing Markov policy for marginally stable systems.

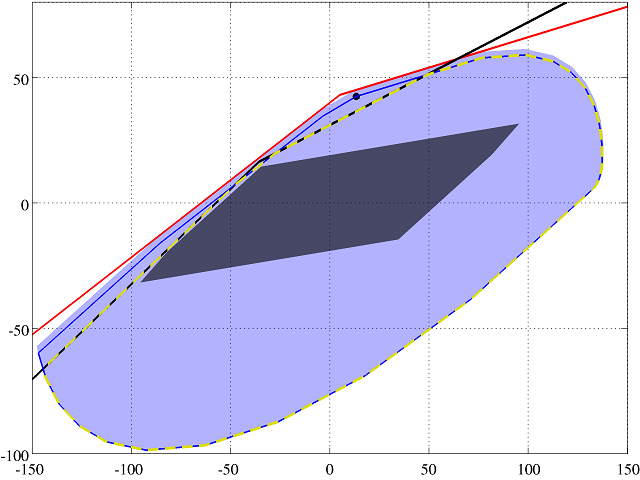

Finally, we develop a systematic approach to ensure strong feasibility of stochastic model predictive control problems under affine as well as nonlinear disturbance feedback policies. Two distinct approaches are presented, both of which capitalize on and extend the machinery of (controlled) invariant sets to a stochastic environment. The first approach employs an invariant set as a terminal constraint, whereas the second one constrains the first predicted state. Consequently, the second approach turns out to be completely independent of the policy in question, and moreover it produces the largest feasible set amongst all admissible policies. As a result, a trade-off between computational complexity and performance can be found without compromising feasibility properties.

- Milan Korda, tel: +420 608 814 011, mailto:korda.m@gmail.com

- Ing. Jiří Cigler, tel: +420 224 357 687, mailto:jiri.cigler@fel.cvut.cz